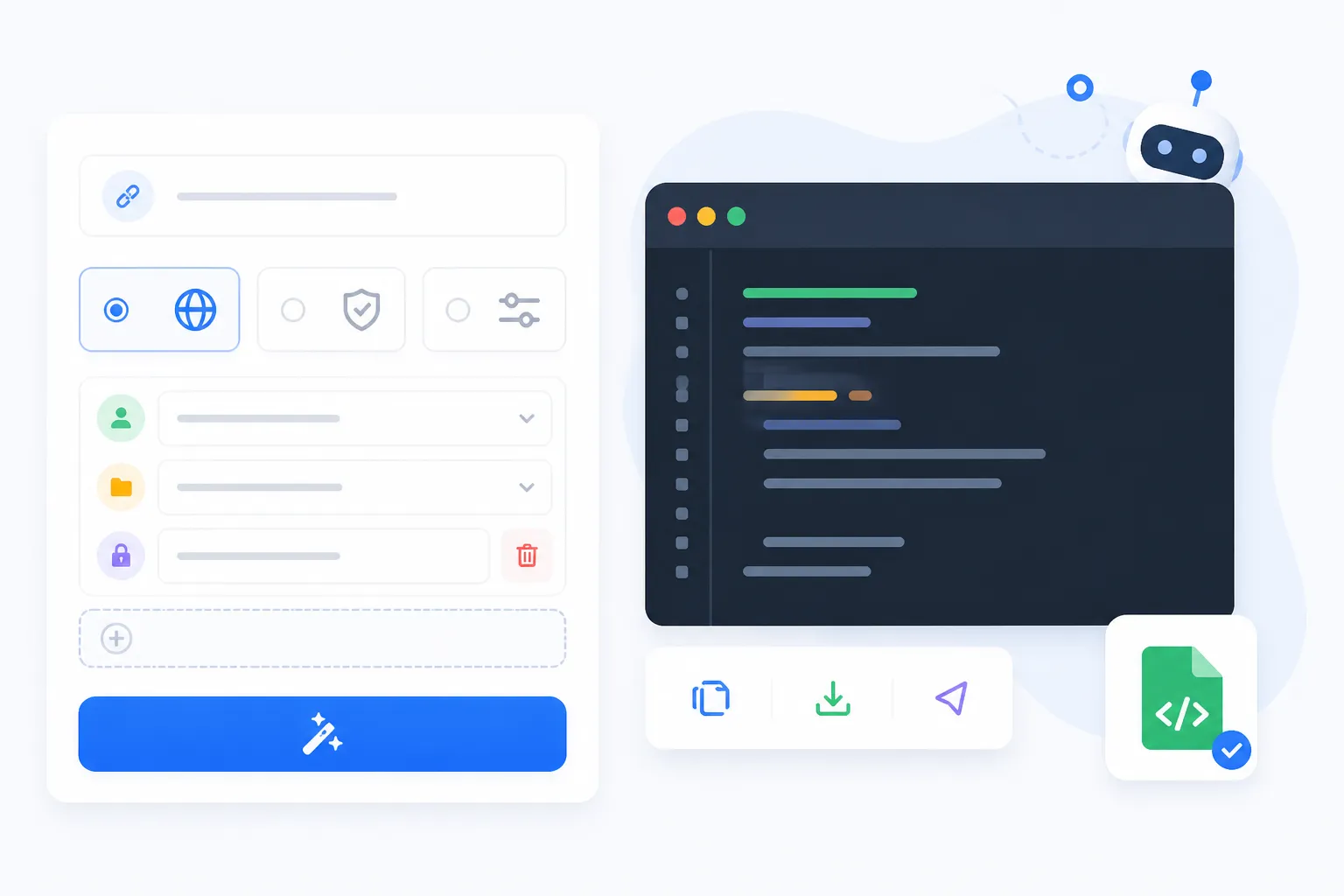

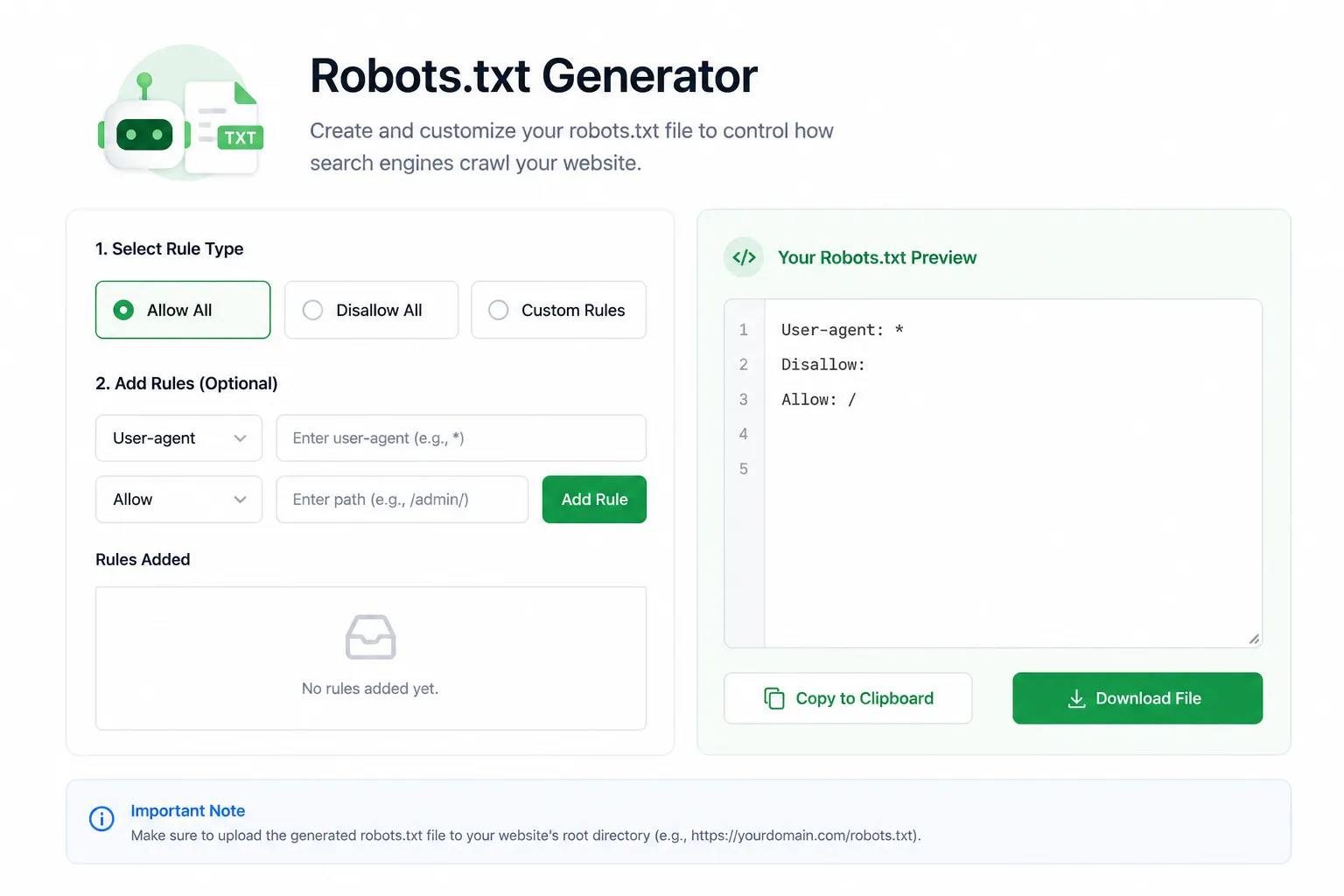

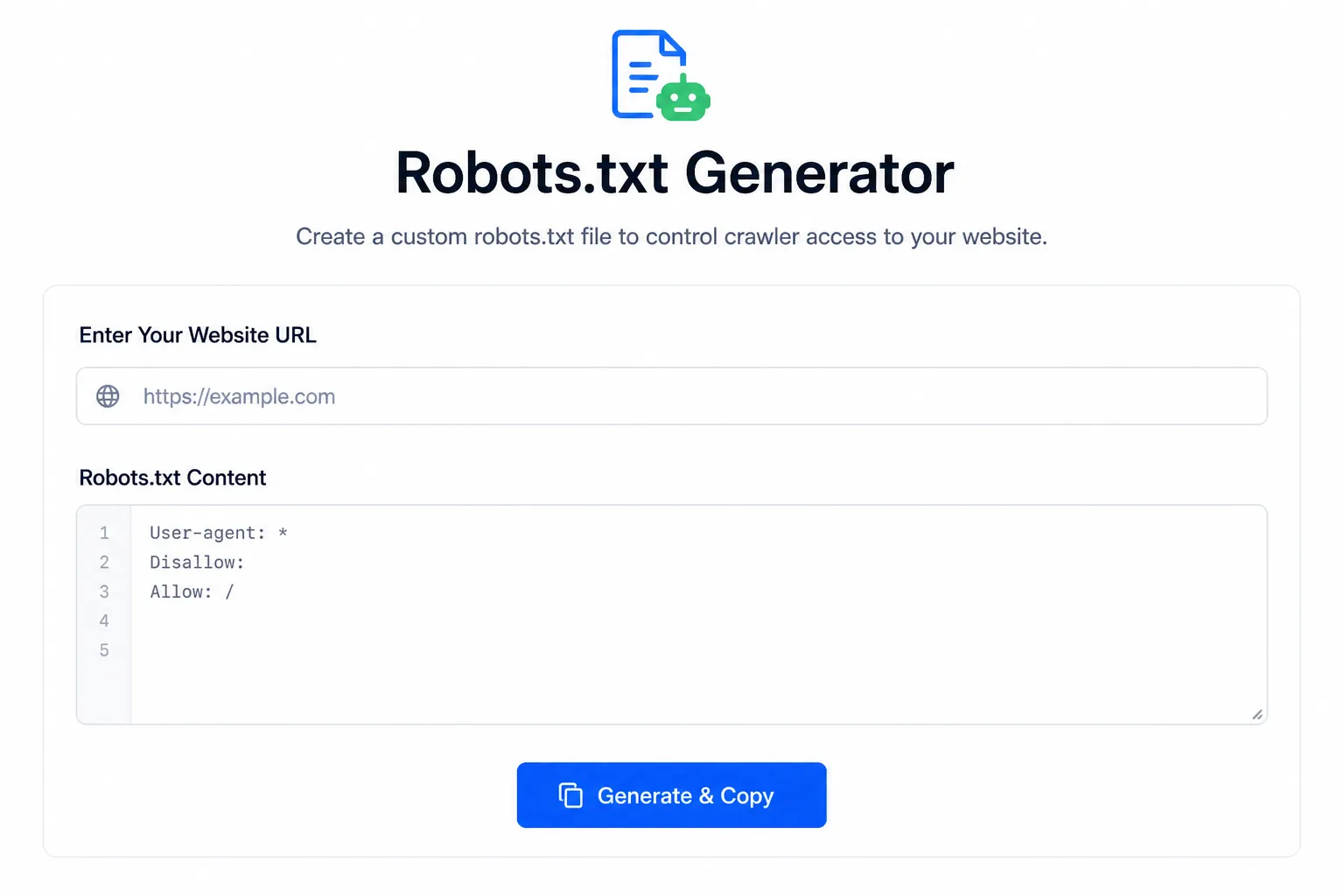

Create a custom robots.txt file to guide search engine crawlers and control which parts of your website should be crawled.

Create an SEO-friendly robots.txt file with Google, Bing, AI bots, sitemap rules, crawl delay, and custom paths.

Robots.txt gives crawling instructions to bots, but it does not protect private data. Do not use it as a security tool.

Generate your robots.txt file instantly — no signup required.

WebTrendSEO’s Robots.txt Generator helps you create a clean and SEO-friendly robots.txt file for your website. This file tells search engine crawlers which pages or folders they can access and which sections should be blocked.

It is useful for website owners, SEO professionals, developers, and bloggers who want better control over how search engines crawl their websites.

No signup required — simple and fast.

A properly configured robots.txt file helps search engines understand which parts of your website should be crawled. It can prevent crawlers from wasting time on low-value pages, duplicate URLs, admin areas, and unnecessary files.

While robots.txt does not directly improve rankings, it supports better crawl management and technical SEO.
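To make the idea concrete, here is a minimal Python sketch of how a robots.txt file with per-bot groups, a crawl delay, and a sitemap line could be assembled. It is an illustration only, not WebTrendSEO's implementation: the `Group` type, the `build_robots_txt` function, the example paths, and the `example.com` sitemap URL are all assumptions made for this example. `GPTBot` is used as one example of an AI crawler user agent.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Group:
    """One rule group for a single user agent (hypothetical helper type)."""
    user_agent: str
    disallow: List[str] = field(default_factory=list)
    allow: List[str] = field(default_factory=list)
    # Crawl-delay is non-standard: Bing honors it, Googlebot ignores it.
    crawl_delay: Optional[int] = None


def build_robots_txt(groups: List[Group], sitemaps: List[str]) -> str:
    """Render rule groups and sitemap URLs as robots.txt text."""
    lines: List[str] = []
    for group in groups:
        lines.append(f"User-agent: {group.user_agent}")
        lines += [f"Allow: {path}" for path in group.allow]
        lines += [f"Disallow: {path}" for path in group.disallow]
        if not group.allow and not group.disallow:
            lines.append("Disallow:")  # an empty Disallow allows everything
        if group.crawl_delay is not None:
            lines.append(f"Crawl-delay: {group.crawl_delay}")
        lines.append("")  # a blank line separates groups
    lines += [f"Sitemap: {url}" for url in sitemaps]
    return "\n".join(lines) + "\n"


# Example paths and URLs below are placeholders, not recommendations.
example = build_robots_txt(
    groups=[
        Group("*", disallow=["/wp-admin/", "/cart/"],
              allow=["/wp-admin/admin-ajax.php"]),
        Group("Bingbot", disallow=["/search/"], crawl_delay=5),
        Group("GPTBot", disallow=["/"]),
    ],
    sitemaps=["https://example.com/sitemap.xml"],
)
print(example)
```

Keeping each user agent in its own group mirrors how crawlers read the file: a bot follows the group that names it and falls back to the `*` group otherwise.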

Create custom robots.txt rules

Control search engine crawler access

Block unnecessary pages from crawling

100% free to use

Help manage crawl budget

No signup required

Incorrect robots.txt rules can block important pages from search engines. Always review your generated file before uploading it to your website.

Avoid blocking important pages, CSS, JavaScript, images, or pages you want Google to index.
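One way to review a generated file before uploading it is to test a short list of must-crawl URLs against it. The sketch below uses `urllib.robotparser` from Python's standard library. The `blocked_urls` helper, the draft rules, and the `example.com` URLs are assumptions for illustration. Python's parser uses simpler matching than Google's (first matching rule wins, and wildcards are not supported), so treat the result as a rough check rather than a guarantee of how any crawler will behave.

```python
from typing import List
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, must_crawl: List[str],
                 user_agent: str = "Googlebot") -> List[str]:
    """Return the URLs from must_crawl that the rules would block."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in must_crawl
            if not parser.can_fetch(user_agent, url)]


# A draft file that accidentally blocks the stylesheet folder.
draft = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /assets/
"""

important = [
    "https://example.com/",
    "https://example.com/blog/seo-tips",
    "https://example.com/assets/site.css",
]

problems = blocked_urls(draft, important)
print(problems)  # ['https://example.com/assets/site.css']
```

Here the CSS file is flagged because `/assets/` is disallowed, which is the kind of mistake worth catching before the file goes live.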

Explore answers to common questions about our Robots.txt Generator, portfolio, SEO strategies, and how WebTrendSEO delivers measurable results across Google and AI-driven search platforms.

A robots.txt file is a text file that tells search engine crawlers which pages or folders they can or cannot crawl on your website.

Yes, WebTrendSEO’s Robots.txt Generator is completely free to use.

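You should upload the robots.txt file to the root folder of your website, so that it is reachable at a URL such as https://example.com/robots.txt. Crawlers do not look for it in subfolders.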
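As a small illustration of where crawlers look for the file, this Python sketch derives the robots.txt address from any page URL on a site. The `robots_url` helper and the `example.com` addresses are assumptions for this example. Each host, including each subdomain, has its own robots.txt.

```python
from urllib.parse import urljoin


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL for the host that serves page_url."""
    # A leading slash makes urljoin replace the whole path and drop the query.
    return urljoin(page_url, "/robots.txt")


print(robots_url("https://example.com/blog/post?id=1"))
# https://example.com/robots.txt
print(robots_url("https://shop.example.com/cart"))
# https://shop.example.com/robots.txt
```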

Not always. Robots.txt controls crawling, not indexing. If a page is already indexed, you may need a noindex tag or removal request.

Yes. If you accidentally block important pages, search engines may not crawl or understand your website properly.

Use our free tools to fix basic SEO issues, or let WebTrendSEO audit and optimize your website for better search visibility.

Feel free to get in touch with us by phone or send us a message.

phone: +92 3400 644414

email: contact@webtrendseo.com